Göran Kauermann is a renowned researcher in the field of statistics. He is a full Professor of Statistics in Germany and, since 2011, has held the Chair of Statistics in Economics, Business Administration and Social Sciences at Ludwig-Maximilians-Universität München (LMU). He is also the Spokesperson of the Elite Master's Programme in Data Science at LMU.

-

In memoriam: Arturo Muga

-

Violeta Pérez Manzano: «Nire ahotsa ijito bakar batengana iristen bada eta horrek inspiratzen badu, helburua bete dut»

-

In memoriam: German Gazteluiturri Fernández

-

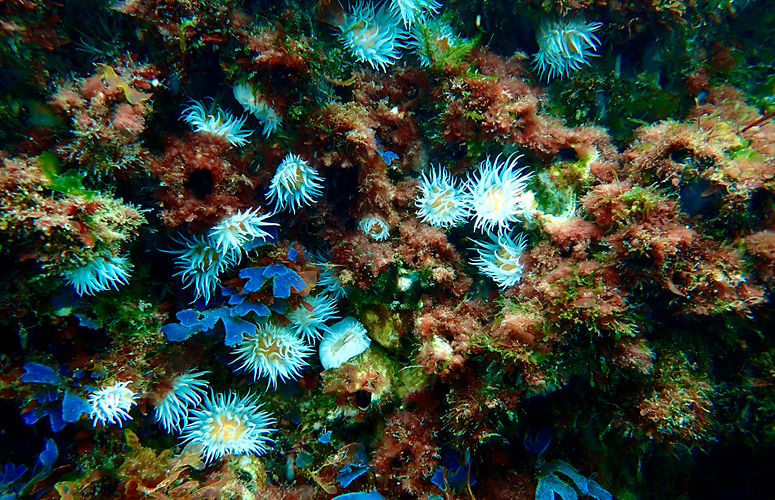

Itsasoaren gainazalaren tenperatura-igoerak aldaketa sakonak eragin ditu makroalgen komunitateetan

-

Azukrea eta edulkoratzaileak. Zer jakin behar dut?

Göran Kauermann, full Professor of Statistics

«Statisticians aim to answer the question of what’s going on while Data Analytic tools from Computer Science focus on what happens next»

- Interview

First publication date: 22/02/2018

On Friday, February 23rd, he will give a seminar on Applied Statistics organized by the Applied Statistics Group of the Basque Center for Applied Mathematics (BCAM) and the UPV/EHU Departments of Applied Economics III (Econometrics and Statistics) and Applied Mathematics, Statistics and Operations. The session, called “Statistical Models for Network Data Analysis – A Gentle Introduction”, is part of a series of seminars that aim to serve as a common ground and a meeting place for discussion and dissemination of Statistics and its potential applications.

We had the chance to interview Kauermann before his talk on Friday, and here is what he told us about Statistics, Big Data and Network Data Analysis:

Thanks to technological advances the amount of data gathered nowadays has increased tremendously. How can statistics help analyse it?

The avalanche of data and the challenges of the Big Data era have increased the reputation of statistics. Statistical reasoning and statistical thinking are important, well beyond the classical fields of statistics. This has led to the new scientific field of Data Science. Though the exact definition of Data Science is not consolidated yet, in my view, it is an intersection of statistics and computer science. The two disciplines approach data analysis from two different angles. While statisticians aim to answer the question “what’s going on”, data analytic tools from computer science (like machine learning) focus on prediction, that is, they tackle the question “what happens next”. Both approaches are necessary and useful, depending on the question and problem. In other words, yes, statistics can and should help to cope with the digital revolution, but it can only be successful if it goes hand in hand with computer science in the new direction of Data Science. Having said that, I also stress that the traditional fields of statistics (medical statistics, econometrics, etc.) remain important at the same time.

Is it easier to work with big amounts of data or does it require more complex methods?

Certainly, statistics is challenged by massive data, and some of our routines just don’t work with Big Data. But I don’t think it requires more complex methods. Looking back, statistical methods were always limited by computational power and flexibility, beginning with simple matrix computation in the 50s and continuing with more complex methods now in the Big Data era. However, some old, traditional statistical and computational ideas are experiencing a resurrection. Tensor methods and linear algebra approximations (e.g. singular value decomposition) are very useful. Instead of analysing all data, one pursues some approximations. The mathematical concept of sufficiency gets a new meaning. Instead of working with all data, one just calculates sufficient statistics, which works even with large-scale data. And after all, sampling appears in a new light: why analyse all petabytes of data instead of just drawing a sample from the data? These are not new or more complex methods, but they are adapted and amended to new and more complex data constellations.
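To make the remark about sufficiency concrete, here is a minimal Python sketch. It is our own illustration, not Kauermann's code. It assumes the goal is simply the mean and variance of a large data set, for which the count, the sum and the sum of squares are sufficient. The data, the chunk size and all function names are hypothetical.

```python
import random

def chunk_stats(chunk):
    """Return the sufficient statistics (n, sum of x, sum of x^2) of one chunk."""
    return len(chunk), sum(chunk), sum(x * x for x in chunk)

def combine(a, b):
    """Merge the statistics of two chunks by adding them component-wise."""
    return tuple(u + v for u, v in zip(a, b))

def mean_and_variance(stats):
    """Recover the sample mean and (unbiased) sample variance from the totals."""
    n, s, ss = stats
    mean = s / n
    # Textbook formula; it can lose precision when the mean is huge
    # relative to the spread, so treat it as illustrative only.
    variance = (ss - n * mean * mean) / (n - 1)
    return mean, variance

# Simulated stand-in for a data set too large to hold in memory at once.
random.seed(0)
data = [random.gauss(10.0, 2.0) for _ in range(10_000)]
chunks = [data[i:i + 1_000] for i in range(0, len(data), 1_000)]

# Each chunk is reduced to three numbers; only those are kept and combined.
total = (0, 0.0, 0.0)
for chunk in chunks:
    total = combine(total, chunk_stats(chunk))

mean, variance = mean_and_variance(total)
print(mean, variance)
```

Each chunk could be processed on a different machine, since only three numbers per chunk need to be combined at the end.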

You work in Network Data Analysis. How would you explain what that is?

Network data are very simple in structure. A set of actors (nodes) interact with each other (edges). The interaction can be friendship, which is just a zero/one coding (1 = friendship between two nodes, 0 = no friendship), or it can be a valued interaction, e.g. a trade flow between actors. And even though the structure is simple, the modelling of such data is difficult if one assumes that the existence of an edge depends on the existence of other edges. That is to say, if the edges, considered as random variables, are mutually dependent. The easiest way to understand this is in a friendship network. The chance of two actors building up a friendship might depend on the number of friends these two actors have in common. In other words, it depends on other edges. Such mutual dependence makes the modelling exercise difficult.
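The zero/one coding and the idea of common friends can be sketched in a few lines of Python. This is a toy illustration of ours: the five actors and their friendships are invented, and the names `adjacency` and `common_friends` are hypothetical.

```python
# Hypothetical friendship network on five actors (invented data).
actors = ["Ana", "Ben", "Cleo", "Dan", "Eva"]
edges = [("Ana", "Ben"), ("Ana", "Cleo"), ("Ben", "Cleo"),
         ("Cleo", "Dan"), ("Dan", "Eva")]

index = {name: i for i, name in enumerate(actors)}
n = len(actors)

# Zero/one coding: adjacency[i][j] = 1 if actors i and j are friends, else 0.
adjacency = [[0] * n for _ in range(n)]
for a, b in edges:
    i, j = index[a], index[b]
    adjacency[i][j] = adjacency[j][i] = 1  # friendship is symmetric here

def common_friends(a, b):
    """Count the actors who are friends with both a and b."""
    i, j = index[a], index[b]
    return sum(adjacency[i][k] * adjacency[j][k] for k in range(n))

print(common_friends("Ana", "Dan"))  # Cleo is the only shared friend -> 1
print(common_friends("Ana", "Eva"))  # no shared friend -> 0
```

A model in which the chance of an edge between Ana and Dan depends on `common_friends("Ana", "Dan")` makes that edge depend on the edges Ana–Cleo and Cleo–Dan, which is the mutual dependence described above.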

What kind of network data do you usually analyse? Could you give us any examples?

The classical field of network data analysis comes from social science, where networks represent friendship networks. However, the models and methods are not limited to this kind of network. Other examples are trading networks, interaction networks of scientists, flow networks, etc.

What kind of statistical models do you use to work with that sort of data?

The workhorse in statistical network data analysis is the Exponential Random Graph Model. It considers the network as a random matrix with 0/1 entries and models the probability of such a matrix in the form of an exponential family distribution. This allows for some intuitive interpretation but suffers from numerical hurdles. These will be exemplified in the talk I’ll give on Friday.
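For readers who want to see the model written out, the Exponential Random Graph Model is usually stated in the following form. The notation is the standard textbook one and does not come from the interview: $Y$ is the random 0/1 adjacency matrix, $y$ an observed network, $s(y)$ a vector of network statistics (for example, the number of edges or of triangles) and $\theta$ the parameter vector.

```latex
P_\theta(Y = y) = \frac{\exp\{\theta^\top s(y)\}}{\kappa(\theta)},
\qquad
\kappa(\theta) = \sum_{y' \in \mathcal{Y}} \exp\{\theta^\top s(y')\}
```

Here $\mathcal{Y}$ is the set of all possible networks on the given nodes. For an undirected network with $n$ nodes it contains $2^{n(n-1)/2}$ elements, so the normalising constant $\kappa(\theta)$ cannot be computed by direct summation except for very small $n$. This is one commonly cited source of the numerical difficulties with the model.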

More information about the seminar and its schedule can be found on BCAM’s website.